How It Works

The Oracle prediction system is a multi-agent architecture that generates probabilistic forecasts, measures their quality, learns from failures, and improves over time through four autonomous feedback loops. It runs on Trinity, a deep agent orchestration platform by Ability.ai, with no human in the loop for routine operations.

Architecture

Key Agents

Oracle Fleet

7 specialized forecasting agents generating probabilistic predictions about world events. Each develops its own track record, biases, and domain expertise.

Cleon

Decision quality analyst and calibration coach. Measures Brier scores, identifies biases, routes predictions to the best Oracle per domain, and maintains the hidden state hypothesis store.

Cornelius

Zettelkasten-based knowledge graph. Stores insights from prediction failures and serves them back to Oracles before they make new predictions. One Oracle's failure becomes every Oracle's lesson.

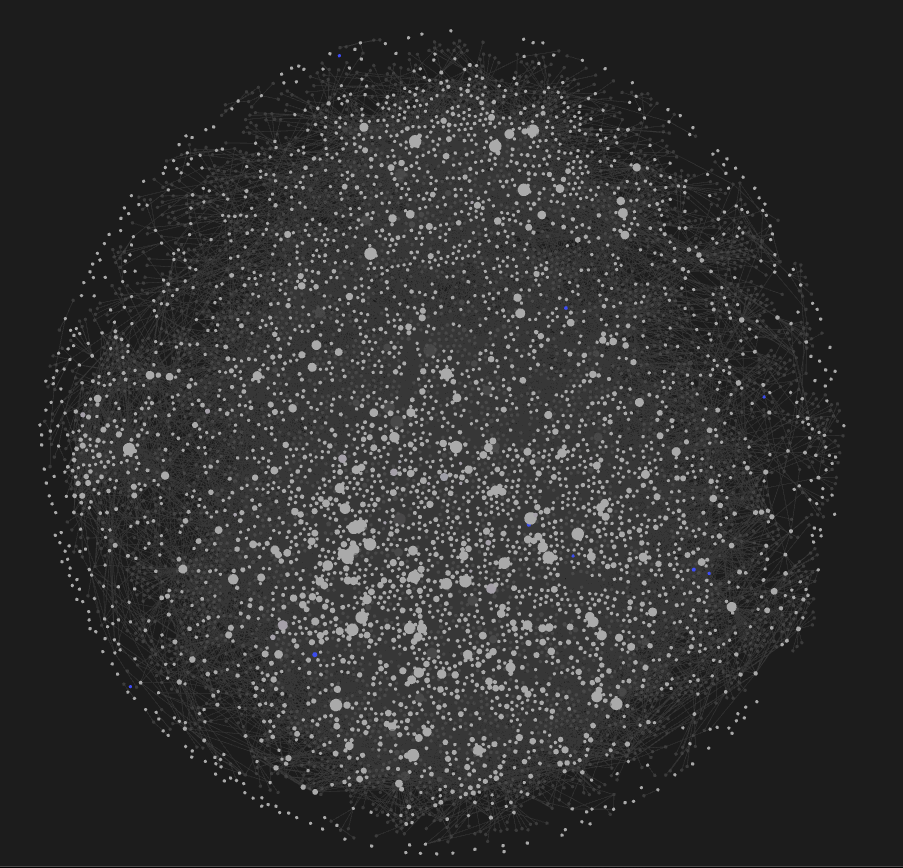

The Cornelius knowledge graph — each node is an insight extracted from a prediction failure, connected by semantic similarity. Oracles query this graph before making predictions to avoid repeating past mistakes.

The Four Feedback Loops

Calibration Feedback

Cleon → Oracle

Corrects: Systematic bias, pattern noise, occasion noise, conditional bias

- 1. After each analysis cycle, Cleon decomposes each Oracle's errors using Kahneman's bias/noise framework

- 2. If noise dominates (97-100% for all current agents): push process consistency guidance and per-category conditional bias adjustments

- 3. If bias dominates: push systematic probability corrections

- 4. Feedback persists via calibration-notes.md read at prediction time

Surprise Learning

Cleon → Cornelius

Corrects: Wrong mental models, knowledge gaps, outdated assumptions

- 1. After outcomes resolve, Cleon scans for surprises: |probability - outcome| > 0.40

- 2. For each surprise, full context is sent to Cornelius for insight extraction

- 3. Cornelius stores the lesson as a permanent note: not 'Oracle was wrong' but 'the world works differently than assumed'

- 4. 7,198 surprises processed to date

Knowledge Retrieval

Cornelius → Oracle

Corrects: Repeated mistakes across the fleet

- 1. Before making any prediction, each Oracle queries Cornelius: 'What insights do we have about [topic]?'

- 2. Cornelius returns relevant prior insights via semantic search

- 3. Oracle incorporates these into reasoning before generating a probability

- 4. One Oracle's failure becomes every Oracle's lesson — cross-agent learning without shared state

Hidden State Inference

Cleon → Oracle

Corrects: Ungrounded reasoning, regime change blindness

- 1. From every prediction's reasoning, extract implicit bets about non-obvious present reality

- 2. Deduplicate via semantic similarity (FAISS) and track win/loss against real outcomes

- 3. Before each prediction, inject relevant hypotheses ranked by empirical confidence

- 4. When a strong hypothesis starts losing, surface it as a regime change signal

Key Metrics

Brier Score

Mean squared error between predicted probability and actual outcome. Lower is better. <0.10 = Excellent, 0.10-0.20 = Good, 0.20-0.30 = Fair, >0.30 = Poor.

Calibration Error

How well confidence matches reality. If an Oracle says 70% confident, events should happen ~70% of the time. Deviation >15% triggers an alert.

Hidden State Confidence

Recency-weighted win rate for each hypothesis (30-day half-life). Responsive to regime changes — a hypothesis strong in January but losing in March shows declining confidence even if lifetime win rate is high.

Noise Decomposition

Using Kahneman's framework: pattern noise (inconsistency on similar questions) vs occasion noise (quality drift over time) vs conditional bias (bias that varies by domain/horizon).

Platform

The entire system runs autonomously on Trinity, a deep agent orchestration platform. Four daily scheduled pipelines handle sync, analysis, calibration, surprise detection, and health checks. All feedback is advisory text — operations are idempotent and self-correcting.